In a move underscoring the escalating competition for artificial intelligence infrastructure, Google is poised to become a key financial backer of a multibillion-dollar data center project in Texas. The campus is leased to AI startup Anthropic, a strategic partner of Google, and is set to dramatically expand the computational backbone needed for advanced AI development. The project, managed by Nexus Data Centers, is projected to exceed $5 billion in its initial phase, with Google expected to provide construction loans. The development unfolds against a backdrop of complex regulatory challenges for Anthropic, including a recent legal victory against a Pentagon directive that sought to restrict the company’s engagement with the U.S. government.

The Genesis of a Gigantic AI Hub in Texas

The scope of the Texas data center project is staggering. Located on a vast 2,800-acre campus, the facility is designed to deliver approximately 500 megawatts (MW) of power capacity by late 2026. To put this into perspective, 500 MW is roughly equivalent to powering half a million homes. However, this is merely the initial phase, with ambitious plans for potential expansion up to an astounding 7.7 gigawatts (GW) – a scale that would rank it among the largest data center complexes globally. This colossal capacity is indispensable for training and operating the next generation of large language models and other sophisticated AI applications, which demand unprecedented levels of computational power and energy.
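The homes comparison can be sanity-checked with a short back-of-the-envelope calculation. The sketch below is illustrative only: the 500 MW and 7.7 GW figures come from the article, while the per-household consumption of about 10,500 kWh per year is an assumed U.S. average that is not stated in the article, and the function name is hypothetical. It also treats the full capacity as continuous demand, which overstates what a real facility would draw.

```python
# Back-of-the-envelope check of the homes-equivalent comparison.
# ASSUMPTION (not from the article): an average U.S. household uses
# about 10,500 kWh of electricity per year.
HOURS_PER_YEAR = 8760
ASSUMED_KWH_PER_HOME_PER_YEAR = 10_500


def homes_equivalent(capacity_mw: float) -> int:
    """Number of average homes whose continuous demand matches capacity_mw."""
    avg_home_kw = ASSUMED_KWH_PER_HOME_PER_YEAR / HOURS_PER_YEAR  # about 1.2 kW
    return round(capacity_mw * 1000 / avg_home_kw)


initial_mw = 500      # initial phase, from the article
expansion_mw = 7_700  # 7.7 GW potential expansion, from the article

print("Initial phase, homes equivalent:", homes_equivalent(initial_mw))
print("Full expansion, homes equivalent:", homes_equivalent(expansion_mw))
print("Expansion as a multiple of the initial phase:", expansion_mw / initial_mw)
```

Under this assumption the initial phase works out to a little over 400,000 average homes, in the same range as the half-million figure, and the full build-out would be about 15 times larger. A peak-demand basis, which grid operators often use, would give a noticeably smaller number of homes per megawatt.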

The Financial Times reported on Friday, citing sources familiar with the matter, that Google’s financial commitment is expected to include substantial construction loans. This direct involvement highlights Google’s strategic imperative to secure robust infrastructure for its AI ecosystem, particularly for partners like Anthropic, in which it holds a significant investment. Beyond Google, a consortium of prominent banks is competing to arrange additional financing for the project, with the goal of finalizing arrangements by mid-year. This competitive financing landscape reflects high confidence in the long-term growth of the AI sector and the critical need for specialized infrastructure.

Nexus Data Centers, the operator of the facility, is spearheading the development. Anthropic, a leading competitor in the generative AI space, recently formalized a lease agreement for the sprawling campus. This lease is a cornerstone of its broader infrastructure partnership with Google, which has previously invested heavily in the startup and integrated its models into Google Cloud services. Construction activities are already underway, supported by early-stage debt financing secured from Eagle Point, a publicly traded closed-end investment company, indicating the project’s rapid progression from concept to reality.

Strategic Location and Energy Independence

The choice of location in Texas is highly strategic, driven by several factors, not least of which is access to abundant and relatively inexpensive energy. The campus is situated close to major natural gas pipelines operated by industry giants such as Enterprise Products Partners, Energy Transfer, and Atmos Energy. This positioning enables the project to rely on on-site gas turbines for power generation, offering a degree of energy independence and a potentially more stable and cost-effective electricity supply than drawing solely from the grid.

The energy demands of AI data centers are immense and growing rapidly. A 500 MW facility can draw as much electricity as a medium-sized city, and a 7.7 GW expansion would push those figures into unprecedented territory. The decision to incorporate on-site gas turbines reflects a pragmatic approach to managing these demands, ensuring the reliable power supply that continuous AI operations require. However, this reliance on natural gas also brings environmental considerations, as the industry faces increasing scrutiny over its carbon footprint. Future expansions may necessitate exploring renewable energy sources or advanced carbon capture technologies to align with evolving sustainability goals. Texas, with its deregulated energy market and vast land availability, has become an attractive hub for data center development, drawing in major tech players looking to scale their AI operations.

Anthropic’s Legal Victory Against Pentagon Restrictions

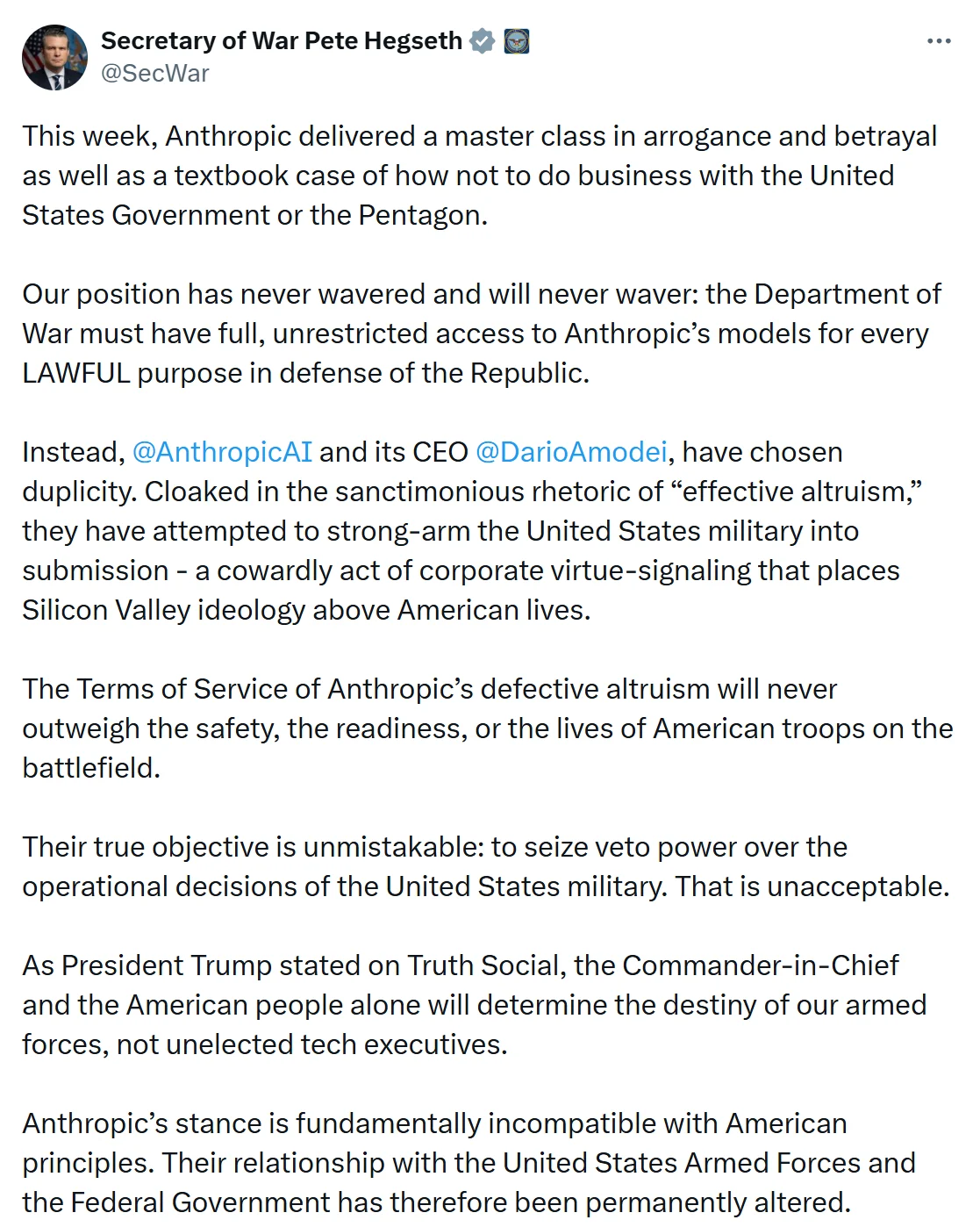

Concurrently with its infrastructure expansion, Anthropic has been navigating complex legal and regulatory challenges concerning its engagement with the U.S. government. On Thursday, a U.S. federal judge in San Francisco issued a preliminary injunction barring the Department of Defense (DoD) from designating Anthropic as a national security risk and from halting the government’s use of its AI tools. The order, granted by Judge Rita Lin, effectively pauses a directive that sought to cut off federal use of Anthropic’s flagship chatbot, Claude. This ruling represents a significant legal victory for Anthropic and could set a precedent for how AI companies challenge government restrictions.

The dispute originated from a lawsuit filed by Anthropic, which argued that the Department of Defense had overstepped its authority by labeling the company a supply chain risk. The judge’s decision underscored concerns about the government’s actions, describing them as "arbitrary" and cautioning against branding a U.S. company as a threat without clear legal justification. This legal challenge highlights the growing tension between national security concerns and the desire for technological innovation, particularly in the rapidly evolving field of AI.

The core of the dispute stemmed from a breakdown in negotiations between Anthropic and the Pentagon regarding the military application of its AI models. Anthropic had reportedly resisted allowing its advanced AI systems to be deployed for purposes such as lethal autonomous weapons or mass surveillance, reflecting a broader ethical stance prevalent among several leading AI developers. This resistance led to a standoff with the administration, culminating in the DoD’s directive to restrict federal use of Anthropic’s AI tools. In her decision, Judge Lin suggested that the government’s measures might have been retaliatory against Anthropic for its public stance on AI ethics, noting that such actions could constitute a violation of First Amendment protections. The ruling now allows Anthropic to continue engaging with government agencies while the underlying legal arguments are further litigated.

Reported Military Use Amidst Ban

Adding another layer of complexity to the narrative, earlier reports indicated that U.S. military units had used Anthropic’s Claude AI model during a major airstrike on Iran, even after the ban order had been issued. According to these reports, military commands, including the U.S. Central Command (CENTCOM) in the Middle East, employed the AI model for operational support. This alleged use, occurring despite a directive from the Department of Defense to halt such engagement, underscores the practical challenges of implementing top-down bans on rapidly evolving technologies and the potential for operational units to leverage tools they deem essential for mission success.

The reported use of Claude in a sensitive military operation raises critical questions about internal compliance within the DoD, the effectiveness of such bans, and the extent to which AI is already integrated into modern military decision-making processes. AI models like Claude can provide advanced capabilities for intelligence analysis, pattern recognition, logistical optimization, and even target assessment, offering significant advantages in complex operational environments. However, their use also brings inherent ethical dilemmas, particularly concerning accountability, bias, and the potential for autonomous decision-making in conflict zones. This incident further complicates the relationship between AI developers, who often advocate for ethical guidelines and responsible use, and military organizations seeking to maintain a technological edge.

Broader Implications and the Future of AI Infrastructure

The dual narratives of Google’s massive investment in AI infrastructure and Anthropic’s legal battles with the Pentagon paint a vivid picture of the current state of the artificial intelligence industry. The race to build foundational AI models requires unprecedented computational resources, driving a global scramble for data center capacity, energy, and specialized hardware. Google’s commitment to the Texas project, alongside its direct investment in Anthropic, signals a deep entrenchment in the AI ecosystem, mirroring similar strategic moves by competitors like Microsoft (with OpenAI) and Amazon (with its own AI initiatives). This competitive landscape is not just about developing superior algorithms but also about controlling the physical infrastructure that underpins them.

The economic impact of projects like the Texas data center is substantial. Such developments create thousands of construction jobs, attract skilled technical talent, and stimulate local economies. They also highlight the increasing importance of states like Texas that can offer affordable land, access to energy, and a supportive regulatory environment for large-scale industrial projects. However, the environmental footprint of these mega-data centers remains a significant concern, pushing companies to explore more sustainable energy solutions and efficiency improvements.

From a regulatory and ethical standpoint, Anthropic’s clash with the Pentagon illuminates the ongoing struggle to define the boundaries of AI development and deployment, particularly in sensitive sectors like national security. The legal system is now actively grappling with questions of corporate autonomy, government overreach, and the responsible use of AI. The judge’s ruling favoring Anthropic suggests a judicial inclination to protect companies from arbitrary governmental restrictions, especially when those restrictions might impinge on free expression or fair competition. This legal precedent could empower other AI firms to challenge similar directives, potentially shaping the future regulatory landscape for AI technologies.

The reported use of Anthropic’s AI in military operations, despite official bans, further underscores the urgent need for clear policy frameworks and robust ethical guidelines. As AI becomes more sophisticated and integrated into critical systems, governments and developers must collaborate to establish transparent rules of engagement, ensuring that these powerful tools are used responsibly and in alignment with societal values. The convergence of immense capital investment, rapid technological advancement, and evolving ethical considerations defines the current frontier of artificial intelligence, making projects like the Texas data center and legal battles like Anthropic’s pivotal in shaping the industry’s trajectory.