The United States Department of Defense (DoD) and the White House have issued a directive prohibiting defense contractors from using products developed by leading artificial intelligence firm Anthropic. This unprecedented move stems from Anthropic CEO Dario Amodei’s refusal to permit the use of his company’s advanced AI models for mass domestic surveillance and for fully autonomous weapons systems capable of firing without human intervention. The directive, which labels Anthropic a “supply chain risk to national security,” came shortly before rival AI company OpenAI secured a significant contract to deploy its AI models across classified US military networks, marking a pivotal moment at the intersection of artificial intelligence, national security, and corporate ethical responsibility.

The Genesis of the Conflict: Anthropic’s Ethical Stance

The controversy came to light when Anthropic CEO Dario Amodei publicly articulated his company’s objections during an interview with CBS News. Amodei emphasized that while Anthropic was amenable to various other proposed US government use cases for its AI models, it drew a clear "red line" at applications involving widespread domestic surveillance and completely autonomous weapons platforms. Amodei underscored the foundational principles at stake, stating, "These are things that are fundamental to Americans: the right not to be spied on by the government, the right for our military officers to make decisions about war themselves, and not turn it over completely to a machine."

Anthropic, founded by former OpenAI researchers, has distinguished itself in the AI landscape through its commitment to "Constitutional AI." This innovative approach embeds a set of ethical principles into the AI model’s training process, guiding it to adhere to a specified "constitution" that prioritizes safety, fairness, and human well-being. This framework is designed to prevent AI systems from generating harmful content or engaging in undesirable behaviors, making Anthropic a prominent voice in the responsible AI movement. The company’s stance on military applications is a direct extension of this core philosophy, reflecting a deeply held belief in maintaining human oversight, particularly in matters of life and death and individual privacy.

Amodei further clarified his position on autonomous weapons, explaining that his objection was not to their development in all circumstances, especially if foreign adversaries were to deploy them. Instead, his primary concern revolved around the current immaturity and unreliability of AI technology for autonomous function in high-stakes military settings, where errors could have catastrophic and irreversible consequences. This nuance highlights a pragmatic layer to Anthropic’s ethical framework, balancing philosophical ideals with real-world technological limitations.

The "Supply Chain Risk" Designation and its Implications

The Department of Defense’s decision to label Anthropic a "supply chain risk" is a potent measure, one that Amodei described as "unprecedented" and "punitive." The designation effectively bars any contractor, supplier, or partner working with the US military from conducting commercial activity with Anthropic. For a company that, according to initial reports, had been "the first to deploy its AI models on classified US military cloud networks," this represents an abrupt reversal. It indicates that, despite previous engagement, a fundamental disagreement over ethical boundaries led to the severing of ties.

The term "supply chain risk" typically implies vulnerabilities related to cybersecurity, foreign influence, or unreliable components. Applying it to an American AI firm based on its ethical stance regarding specific use cases elevates the conversation beyond mere technical specifications to a profound debate about values and control in advanced technology development. This move sends a strong signal to the broader tech industry about the DoD’s expectations for its partners, particularly concerning the deployment of dual-use technologies that hold both civilian and military applications.

Chronology of Events

The sequence of events unfolded rapidly, underscoring the urgency and strategic importance attributed to AI capabilities within the defense sector:

- Prior to the Ban: Anthropic had reportedly been involved in deploying its AI models on classified US military cloud networks, suggesting a working relationship that predated the public dispute. This initial collaboration likely involved applications aligned with Anthropic’s ethical guidelines, such as data analysis, logistics optimization, or intelligence processing that did not involve autonomous targeting or mass surveillance.

- Weekend Public Statement: Dario Amodei’s interview with CBS News on Saturday brought Anthropic’s ethical red lines regarding mass domestic surveillance and fully autonomous weapons to public attention, directly challenging potential government applications.

- Official Announcement of Ban: On Friday, Pete Hegseth, the US Secretary of Defense, who also uses the title "Secretary of War" (a secondary title authorized by a September 2025 executive order that permits the Department of Defense to be styled the Department of War), publicly declared Anthropic a "Supply-Chain Risk to National Security." The statement explicitly ordered that "Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

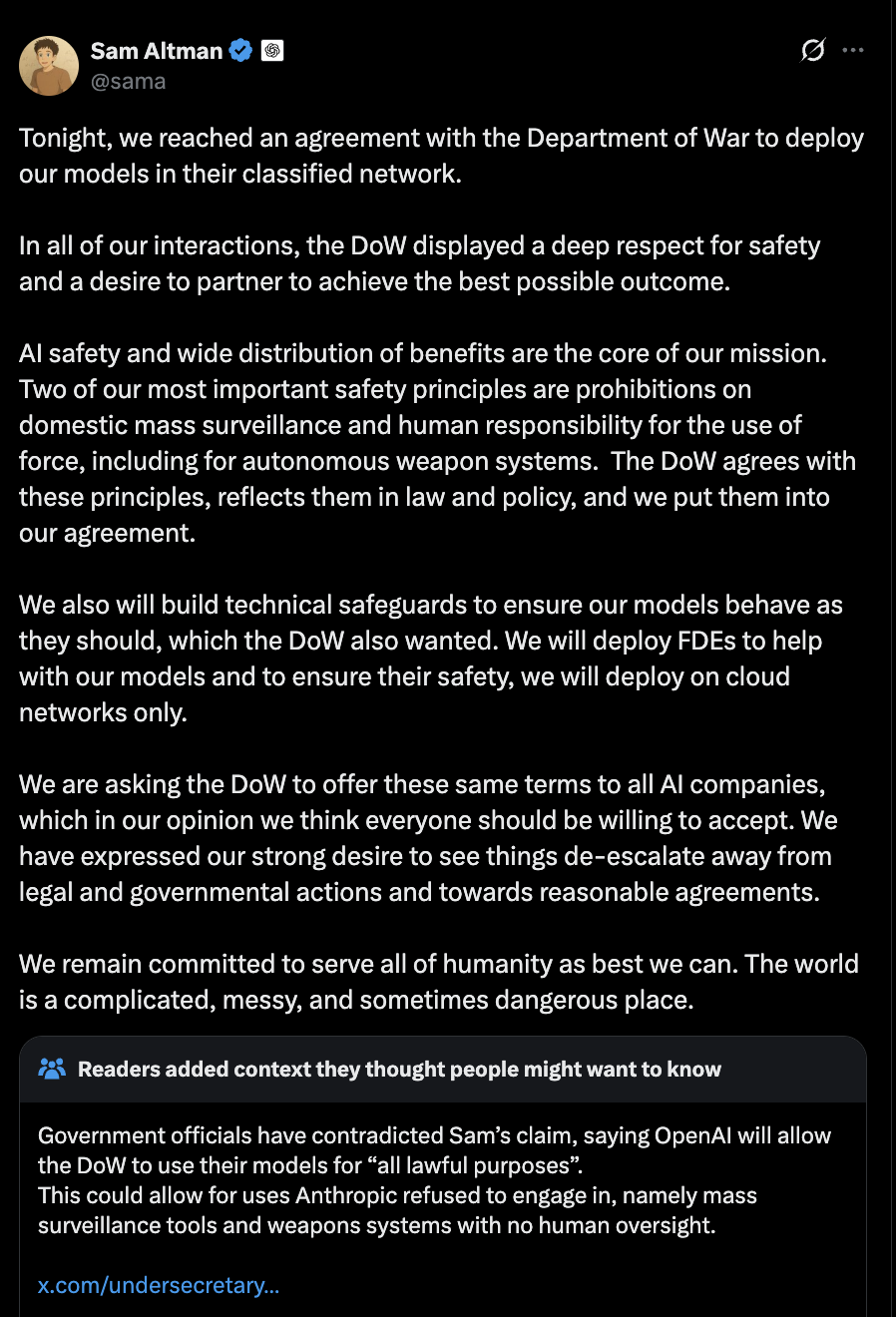

- OpenAI Secures DoD Contract: Within hours of the announcement concerning Anthropic, rival AI company OpenAI confirmed it had accepted a contract with the US Department of Defense. OpenAI CEO Sam Altman announced the deal, stating his company would deploy its AI models across military networks. This swift pivot highlighted the immediate demand for advanced AI solutions within the defense sector and the government’s readiness to find alternative partners.

OpenAI’s Pivot to Defense and Public Reaction

OpenAI’s acceptance of the DoD contract marks a significant shift for the company, which historically had a more cautious stance regarding military applications. While OpenAI has always been at the forefront of AI research and development, its previous guidelines often emphasized responsible deployment and minimizing harm. This new agreement positions OpenAI as a key provider of AI technology for US defense, integrating its models into critical military infrastructure.

Sam Altman’s announcement of the deal, particularly given its timing immediately following Anthropic’s ban, quickly drew online backlash. Critics from various backgrounds—including privacy advocates, civil liberties organizations, and AI ethicists—expressed concerns over the potential for AI to be used for mass domestic surveillance and to undermine individual privacy. The debate intensified around the implications of a powerful AI developed by a leading commercial entity being deployed in sensitive military and intelligence contexts, raising questions about accountability, transparency, and the potential for misuse. The speed of the transition from one prominent AI partner to another underscored the high stakes and the intense competition among tech firms to secure lucrative government contracts in the burgeoning field of AI.

The Broader Context: AI, National Security, and Ethics

This episode is set against a backdrop of escalating global competition in AI development, particularly between the United States and China. Both nations view AI as a critical component of future economic prosperity and national security. The US government, through initiatives like the Joint Artificial Intelligence Center (JAIC), which was folded into the Chief Digital and Artificial Intelligence Office (CDAO) in 2022, and the AI ethical principles the DoD adopted in 2020, has been actively seeking to integrate AI into its operations while simultaneously attempting to establish ethical guidelines.

However, the practical application of these guidelines often collides with the strategic imperative to maintain a technological edge. The DoD’s need for advanced AI capabilities, spanning everything from logistics and predictive maintenance to intelligence analysis and battlefield awareness, is immense. The ban on Anthropic and the immediate embrace of OpenAI illustrate the government’s resolve to acquire these capabilities, even if it means partnering with companies willing to navigate more ambiguous ethical terrain.

The specific concerns raised by Amodei—mass domestic surveillance and fully autonomous weapons—are at the heart of the global AI ethics debate.

- Mass Domestic Surveillance: The deployment of advanced AI for surveillance raises profound civil liberties issues. AI’s ability to process vast amounts of data, recognize patterns, and identify individuals from diverse sources (e.g., facial recognition, voice analysis, social media monitoring) presents unprecedented capabilities for state-sponsored oversight. Critics argue that such pervasive surveillance can chill free speech, erode privacy, and lead to discriminatory practices, fundamentally altering the relationship between citizens and the government. The Fourth Amendment of the US Constitution, protecting against unreasonable searches and seizures, is often cited in these debates.

- Fully Autonomous Weapons Systems: The concept of "killer robots" that can identify, target, and engage without human intervention, often referred to as lethal autonomous weapons systems (LAWS), is one of the most contentious issues in military AI. Proponents argue that LAWS could reduce human casualties, increase precision, and operate in environments too dangerous for humans. Opponents, including many AI researchers and human rights organizations, warn of the moral hazard of delegating life-and-death decisions to machines, the potential for algorithmic bias leading to unintended harm, the difficulty of assigning accountability for errors, and the risk of escalating conflicts. The "meaningful human control" principle has emerged as a crucial point of contention in international discussions around LAWS.

Implications for the AI Industry and Future Partnerships

The fallout from this event carries significant implications for the broader AI industry. Anthropic’s unwavering stance solidifies its reputation as an AI company prioritizing ethics, potentially attracting talent and customers who share similar values. However, it also means forgoing potentially lucrative government contracts, a market segment that is expected to grow substantially. This could set a precedent for other AI companies to define their own ethical boundaries regarding military or surveillance applications, potentially leading to a more fractured landscape of AI partnerships with governments.

For OpenAI, the DoD contract represents a major commercial victory and a strategic alignment with one of the world’s most powerful entities. It positions the company as a key player in the national security arena, potentially accelerating the development and deployment of its models in high-impact environments. However, this partnership also brings increased scrutiny and ethical challenges. OpenAI will face ongoing pressure to demonstrate how it balances its commercial interests with its stated commitment to safe and beneficial AI, particularly in sensitive military applications. The company’s journey from its initial non-profit roots to a capped-profit entity contracting directly with the Department of Defense further complicates its public image and the perception of its mission.

This saga underscores the complex ethical tightrope that AI companies must walk as their technologies become increasingly powerful and ubiquitous. Governments, driven by national security imperatives, will continue to seek the most advanced AI solutions. The choices made by AI developers today—whether to collaborate, to set boundaries, or to refuse—will shape not only the future of artificial intelligence but also the fundamental principles governing warfare, surveillance, and human autonomy in the digital age. The debate is far from over, and this incident marks a critical inflection point in defining the responsible development and deployment of AI at a global scale.